Lines of Code Are Dead. Tokens Aren't the Answer Either.

The board wants GenAI wins. Not exploration. Not ideation. Wins. They want competitive advantage, visible progress, and proof they are not sleepwalking through the biggest shift since the early internet.

C-suites need momentum on a slide. By next quarter. The pressure is legitimate. Show that the money spent on ChatGPT licenses and vendor briefings is producing value.

So leadership measures what they can see.

Spoiler: success is not what they are measuring.

The Celebrated Metrics

"AI adoption is soaring. Ten thousand prompts this month."

Sales, marketing, operations, support. Everyone's using it. Usage graphs are climbing. The quarterly deck looks impressive. Even the CFO is using ChatGPT to better understand the growing GPT invoice.

"Our top performer needed increased token allocation. Leading the charge!"

Developer X is all-in on GenAI. Burning through tokens faster than anyone on the team. Clearly embracing the future. Worthy of recognition. Finance approved the budget increase to keep the momentum going.

"Technical excellence: Engineer running 30+ MCP servers simultaneously. Mastering the tools!"

Model Context Protocol servers for everything: documentation, APIs, databases, code repos, internal wikis. Comprehensive coverage. The setup is impressive. The team lead is documenting it as a best practice for others to follow.

What’s Actually Being Measured

What do these have in common? They’re all measuring activity. None of them measure outcomes.

Welcome to Metrics Theater, the sequel to Success Theater. Back then, we built demos that looked good but didn’t survive production. Now we’re measuring numbers that look good but don’t measure value.

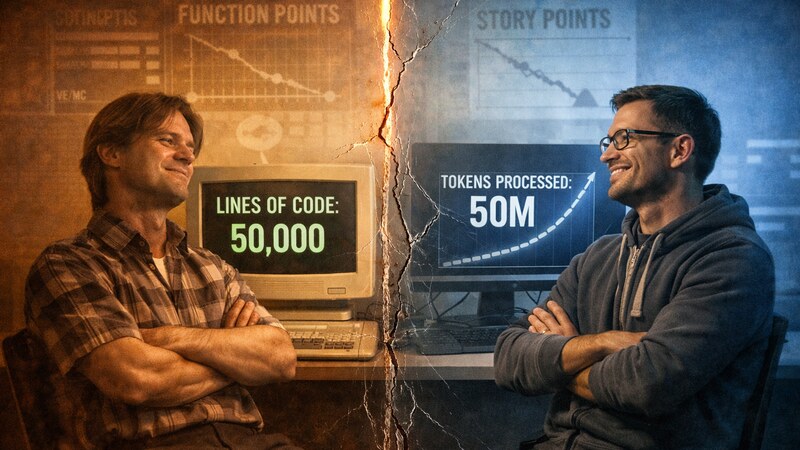

Lines of code didn’t measure quality twenty years ago. We learned that lesson the hard way: more code meant more bugs, more maintenance debt, more complexity. Volume was a vanity metric. The industry moved on.

All of this has happened before, and all of this is happening again.

Prompt counts, token consumption, MCP server proliferation. Different wrappers, same delusion. We’re celebrating activity and calling it progress. We’re rewarding consumption and calling it performance. We’re documenting waste and calling it best practice.

Let’s Dismantle This

The Activity Trap

Ten thousand prompts in a month. Fourteen hundred hours of employee time. Sounds impressive until you ask one question: how many of those outputs were actually used?

Prompt counts measure how many times someone asked an LLM something. They don’t measure whether the answer was useful, whether it accelerated work, or whether it went anywhere at all. A thousand questions that produce zero usable answers is just expensive noise.
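The "how many outputs were actually used" question reduces to a simple ratio. Here is a minimal sketch, assuming you can tag each prompt with whether its output ended up in real work. The `PromptRecord` type and its `output_used` flag are hypothetical; most usage dashboards don't capture this, which is exactly the gap.

```python
from dataclasses import dataclass


@dataclass
class PromptRecord:
    prompt_id: str
    output_used: bool  # hypothetical flag: did the answer make it into real work?


def utilization_rate(records: list[PromptRecord]) -> float:
    """Share of prompts whose output was actually used (0.0 when there are none)."""
    if not records:
        return 0.0
    used = sum(1 for r in records if r.output_used)
    return used / len(records)


# Illustrative data only, not real measurements.
records = [
    PromptRecord("p1", True),
    PromptRecord("p2", False),
    PromptRecord("p3", False),
    PromptRecord("p4", True),
    PromptRecord("p5", False),
]

print(f"{len(records)} prompts, {utilization_rate(records):.0%} used")
```

The prompt count is the denominator. On its own it tells you nothing until you have the numerator.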

Activity has never equaled productivity. We knew this. Somehow we forgot.

The Complexity Trap

Running 30+ MCP servers simultaneously isn’t mastery. It’s complexity worship.

You know the type. Seven layers of abstraction where two would do. Microservices for a CRUD app. It looks sophisticated. It sounds impressive in architecture reviews. And when that engineer presented this setup, nobody in leadership asked what problem 30 servers solved that 3 couldn’t.

Here’s what that sprawl actually creates: context windows drowning in redundant information, premature context rot as signal dies in noise, token burn at conversation startup before any work begins. Every server adds overhead. Every source adds noise.
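A rough way to picture the startup cost: every connected server contributes tool definitions that sit in the context before the first real message. The sketch below is back-of-the-envelope arithmetic, not a measurement. The tools-per-server count, tokens-per-tool figure, and context window size are all assumed values; substitute numbers from your own setup.

```python
def startup_overhead(servers: int, tools_per_server: int, tokens_per_tool: int) -> int:
    """Estimated tokens spent on tool definitions before any work begins."""
    return servers * tools_per_server * tokens_per_tool


def overhead_share(overhead_tokens: int, context_window: int) -> float:
    """Fraction of the context window consumed by that overhead."""
    return overhead_tokens / context_window


# All three constants are hypothetical, chosen only to show the scaling.
TOOLS_PER_SERVER = 10
TOKENS_PER_TOOL = 150
CONTEXT_WINDOW = 200_000

for server_count in (3, 30):
    overhead = startup_overhead(server_count, TOOLS_PER_SERVER, TOKENS_PER_TOOL)
    share = overhead_share(overhead, CONTEXT_WINDOW)
    print(f"{server_count} servers: {overhead} tokens at startup ({share:.1%} of context)")
```

Under these assumptions the overhead scales linearly with server count, so ten times the servers means ten times the tokens spent before anyone has asked a question.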

The pragmatic engineer running 3 carefully chosen MCP servers, getting clean context and shipping quality code? Invisible. The inefficient one burning 5x the tokens for equivalent results? Documented as best practice.

MCP isn’t the problem. Sprawl is the problem. And leadership just institutionalized it.

The Consumption Trap

This is where the threads converge. Token usage is up 300%. Your highest consumer needed increased allocation. Clearly leading the charge on AI adoption. Budget approved. Recognition given. The quarterly deck shows the upward trend.

But nobody asked why. Nobody asked what you got for it. You have the report. You know who your top token consumers are. You celebrated them. You increased their budgets. You never asked what they produced.

High token consumption might mean poorly designed prompts stuffing unnecessary context. It might mean inefficient workflows repeating failed attempts. It might mean context bloat drowning signal in noise. It might mean plausible-sounding nonsense that got discarded after burning tokens. It might mean burning money on garbage outputs nobody used.

You didn’t measure cost per outcome. You didn’t ask if they’re shipping quality code efficiently or just burning cloud budget theatrically.

You saw a big number and handed out the trophy. What the fuck are we actually doing?

The CFO isn’t using ChatGPT to understand the invoice because you’re winning. The CFO is using ChatGPT because the numbers don’t make sense and nobody can explain what you got for them. Finance didn’t approve the budget increase because your top consumer is creating value. Finance approved it because leadership said “this is important” and finance doesn’t have the technical depth to push back.

You rewarded your least efficient developers. And now every other engineer sees what gets celebrated: token burn gets visibility, complexity gets rewarded, efficiency gets ignored.

Three metrics. Three traps. One pattern: rewarding visibility over value.

We spent twenty years teaching the industry that volume is a vanity metric.

I’m frustrated because this is predictable.

Pattern Recognition

We’ve been here before. Lines of code. Function points. Velocity without context. Sprint completion rates that meant nothing. The industry has a long history of measuring the wrong things, realizing it, and correcting course.

Now With More Cost.

Board pressure is legitimate. The question was never whether to measure GenAI usage. It’s what to measure. And right now, you’re reaching for metrics that feel familiar but don’t fit.

Here’s the thing about those twenty years of learning: it was engineers who learned the lesson. The debates about LOC happened in code reviews, methodology arguments, engineering retrospectives. The industry moved on because engineers stopped using volume as a proxy for quality.

But most execs weren’t in those rooms. From their vantage point, activity metrics looked like progress. And progress translates to momentum in the boardroom.

So when GenAI arrived and the board wanted metrics, you reached for familiar shapes. Token counts feel like lines of code. Usage graphs feel like adoption curves. Big numbers feel like progress. The pattern match wasn’t malice or laziness. It was reaching for tools that looked right from a distance.

But GenAI doesn’t map cleanly to those shapes. Volume and value are decoupled. High consumption can mean high waste just as easily as high productivity. The metrics that look right are measuring the wrong thing.

Here’s the feedback loop you’ve created: celebrate activity metrics, and engineers optimize for activity. Token consumption goes up. Prompt counts climb. The numbers look good, so you report progress. But the underlying value never moved. Outcomes, efficiency, and velocity stayed flat.

The incentive structure is the problem. Reward consumption, get consumption. Reward outcomes, get outcomes. Right now, you’re rewarding the wrong thing.

What Actually Matters

If prompts, tokens, and complexity don’t measure success, what does?

Stop measuring how much GenAI your organization consumes. Start measuring what you got for it.

Adoption Rate

Has GenAI been absorbed into actual workflows, or does it remain adjacent to the real work?

Look for workflow displacement, not just usage:

- Did time-to-completion decrease, or are you just doing more steps now?

- Are cycle times decreasing (code reviews, documentation, debugging)?

- Is GenAI in the critical path, or is it a side tool people use occasionally?

The test: If you turned off GenAI tomorrow, would velocity drop significantly, or would work continue largely unchanged? If the answer is “largely unchanged,” you don’t have adoption, you have activity.

Cost Per Outcome

What did you spend per successful business result? Developer A burns 500K tokens and ships three features. Developer B burns 50K tokens and ships three features. One of them understands efficiency. The other one got a participation trophy.
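The Developer A versus Developer B comparison is plain division. A minimal sketch, assuming a flat price per million tokens; the `USD_PER_MILLION_TOKENS` value is a placeholder rather than any vendor's real rate, and "feature shipped" stands in for whatever outcome you actually track.

```python
import math


def cost_per_outcome(tokens_used: int, outcomes: int, usd_per_million_tokens: float) -> float:
    """Token spend divided by outcomes delivered."""
    spend = tokens_used / 1_000_000 * usd_per_million_tokens
    if outcomes == 0:
        # Nothing shipped: treat the cost per outcome as unbounded.
        return math.inf
    return spend / outcomes


USD_PER_MILLION_TOKENS = 10.0  # hypothetical price, for illustration only

dev_a = cost_per_outcome(500_000, 3, USD_PER_MILLION_TOKENS)
dev_b = cost_per_outcome(50_000, 3, USD_PER_MILLION_TOKENS)

print(f"Developer A: ${dev_a:.2f} per feature")
print(f"Developer B: ${dev_b:.2f} per feature")
print(f"A costs {dev_a / dev_b:.0f}x more per feature than B")
```

The price cancels out of the ratio. Whatever your rate is, A spends ten times what B spends for the same three features.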

Time Saved

Measurable reduction in task completion time compared to the manual process. If your “AI-accelerated” workflow takes the same amount of time as the old process, you didn’t accelerate anything. You added complexity and a cloud bill.
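One way to keep this honest is to count the whole assisted workflow, including review and correction, against the manual baseline. A sketch under that assumption; the function name and the minute figures are illustrative, not measurements.

```python
def time_saved_pct(baseline_minutes: float, assisted_minutes: float) -> float:
    """Percent reduction in completion time versus the manual baseline.

    Negative values mean the assisted workflow is slower.
    """
    if baseline_minutes <= 0:
        raise ValueError("baseline_minutes must be positive")
    return (baseline_minutes - assisted_minutes) / baseline_minutes * 100


# Hypothetical task: 60 minutes by hand.
manual = 60.0
# Assisted time must include prompting AND reviewing/fixing the output.
prompting = 20.0
review_and_fixes = 35.0
assisted = prompting + review_and_fixes

print(f"Time saved: {time_saved_pct(manual, assisted):.1f}%")
```

If you count only the prompting time, the number looks great. Count the review too and it may barely move.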

Quality Improvement

Fewer errors, higher accuracy, better outcomes compared to baseline. GenAI that produces work requiring the same level of human review and correction as the manual process isn’t creating value. It’s creating busywork.

Business Impact

Which specific KPI moved because of this GenAI initiative? Revenue up? Costs down? Customer satisfaction improved? Deployment time reduced? If you can’t connect the GenAI spend to a business metric that matters to the board, you’re not showing wins, you’re showing activity.

These aren’t aspirational metrics. They’re the minimum bar for knowing whether GenAI is creating value or consuming budget.

Direct Message to the Executive

Board pressure is real. You need to show GenAI wins. But manufacturing metrics that look good in quarterly decks while actual value stays flat isn’t strategy. It’s borrowed time until someone asks the ROI question you can’t answer.

Not because GenAI failed. Because you measured theater instead of outcomes.

Start with the metrics that matter. Adoption rate. Cost per outcome. Time saved. Quality improvement. Business impact. Put these in your next review.

Ask the uncomfortable questions. What did we actually get for this spend? Who’s shipping value efficiently versus burning tokens theatrically?

You’ll know you’re measuring the right things when the numbers make you uncomfortable. When workflow displacement reveals that most manual processes are still running in parallel. When cost per outcome shows who’s efficient and who’s burning budget. When time saved demonstrates that most workflows haven’t actually accelerated. That discomfort is data. Don’t smooth it over. Use it.

Champion the engineers who understand cost per outcome. The ones who push back when big numbers get celebrated. The ones whose instinct is “what did we get for it?” rather than “look how much we’re using.” They exist. They’ve been doing the work while the metrics theater played out around them.

Reward outcomes, not consumption. Measure adoption, not generation. Celebrate efficiency, not complexity.

The board wants GenAI wins. Give them real ones.

Measure like an engineer. Report like an executive. Stop confusing the two.

// Pragmatic GenAI. Where vanity metrics go to die.