You're Not Adopting GenAI. You're Coping With It.

You deployed probabilistic systems with deterministic playbooks. Now teams cope, not adopt. The paradigm shift you mandated but never owned. Warning: may contain traces of actual engineering.

I recently spent real time on a colleague’s pull request. My reviews tend to be thorough, methodical, and tempered with mentorship. I lean toward hints at what could be improved rather than prescriptive fixes. Over the years I’ve found this approach far more effective for developing engineers who solve problems rather than engineers who follow instructions.

This review was no different. I traced logic through services, questioned why a new dependency existed when the project already had a wrapper for the same thing, and wrote feedback meant to teach. In my own words. Because I take pride in our team’s success rate and low bug frequency, and because I respect my colleague enough to invest real effort.

The code was GenAI-driven. You could tell. Not because the code was bad. Because the code was generic. Existing project patterns, ignored. A dependency we already had a wrapper for, reintroduced. The model defaulted to training-data assumptions about how things should work rather than how things actually work in our codebase, with our business context, under our constraints. A well-prompted model can evaluate existing patterns. This one was not asked to.

A conversation for another day. What matters here is what happened next.

The response to my review came back in minutes. Three structured paragraphs. Each one referenced specific points from my feedback. Acknowledged the architectural concern. Noted the dependency overlap. Closed with something along the lines of, “I’d be eager to better understand the established patterns here so I can align more closely going forward.”

Sounds like comprehension. Like someone absorbing feedback and signaling growth. Exactly the kind of response a senior engineer hopes to get after investing real effort in a review.

I read enough LLM output daily to recognize the patterns. Authentic feedback went in. A cold LLM response came back. The signal was there, but the wrong signal was sent.

Here’s the thing about plausible-sounding nonsense. The person sending the message thinks the message lands. They read the response back, see structure and specificity, and feel confident they’ve responded adequately. The LLM flatters the sender. Always does.

"Thank you for the detailed review. You raise a valid point about the dependency overlap; I can see how consolidating would improve maintainability. The architectural concern around the service boundary is well-taken and I'll revisit the approach. I'd be eager to better understand the established patterns here so I can align more closely going forward."

"I pasted your feedback into the model and received something resembling comprehension. I didn't engage with any of this. I don't know why we have the abstractions we have. But the response looks professional enough to close the loop."

"Good catch on the dependency, honestly didnt realize we had a wrapper. Wheres the documentation? Also I went back and forth on the service boundary. My concern was where the validation layer sits. Does the existing pattern handle the case or is the split intentional? happy to pair on this if async isn't working."

The human version has typos in its soul. Imperfect. Asks a question revealing a gap in understanding. And precisely why the human version builds trust. Someone thinking about the problem rather than performing comprehension.

Here’s where I’m supposed to tell you I quietly updated my mental model of this person, started giving less feedback, and moved on. The expected pattern. What most people do.

But this colleague and I had something most professional relationships don’t. I’m several levels above him on the org chart. The kind of gap where honest feedback typically flows one direction or not at all. I’ve intentionally built bidirectional feedback dynamics with several developers on my team. Not by accident. By practice. This colleague was one of them. He’d called me out on things. I’d called him out. We’d built the kind of trust where directness across a level gap isn’t a threat. Where the hierarchy exists on paper but doesn’t govern how we talk to each other.

So I told him. Directly. Why the response was hollow. What the response missed. Why the response mattered. Not a power move. Not a gotcha. A continuation of an honest working relationship between two people who had already agreed candid feedback is how you get better.

He received the feedback well and started being more intentional with his responses and PR interactions.

About a month later, he told me he appreciated the feedback. Said he feels like he’s actually learning more now by engaging with feedback himself instead of routing responses through a model that produces the appearance of engagement.

The story is real. And the story ended well.

The code review was one exchange. The pattern is everywhere. Every interaction builds or erodes a running assessment of who you are in someone else’s head. Not your resume. Not your title. Whether you’re someone worth investing in. Worth mentoring. Worth being honest with.

The sender judges polish. The recipient judges substance.

Plausible-sounding nonsense does not stop being nonsense because you signed your name to it.

The corrosion is quiet. Your manager does not pull you aside and say, “I can tell you’re using AI for your messages, and I’m investing less in your development because of the lack of effort.” Your colleague does not reply with, “Your response reads like an LLM and I’m going to stop giving you real feedback.” They just give you less. Less mentorship. Less honesty. Less of the messy, human, imperfect investment that actually builds careers.

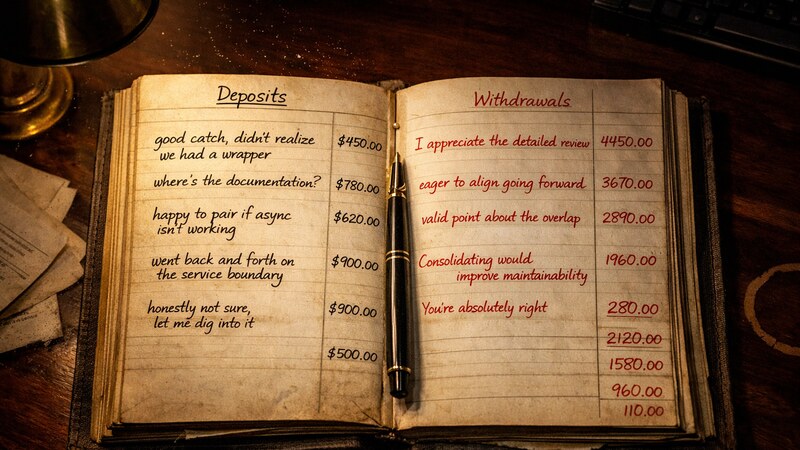

Every AI-generated message is a withdrawal from a trust account you cannot see. The balance silently declines. Nobody sends you a statement.

You’re not saving time. You’re spending credibility.

My colleague’s story ended well because we could have the conversation. Two people with enough mutual trust to be comfortable with uncomfortable feedback.

This did not happen overnight. The bidirectional feedback culture on my team took deliberate effort to build. Not just between me and individual developers. Peer to peer. Across level gaps. The kind of environment where anyone can say “your response missed the point” without navigating politics first. Years of practice, not a policy memo.

Most teams do not have this level of maturity. Professional relationships require years of trust before directness feels safe. Up the org chart? Rarely! The conversation carries career risk that nearly everyone avoids out of self-preservation. Across peer lines without established trust? The conversation stalls before it has a chance to start.

The uncomfortable truth: People sending AI-generated communication know exactly when they receive AI-generated responses. They recognize the patterns in everyone else’s messages. The structured acknowledgment, the curiosity closer, the polished emptiness. They just have not connected the recognition to their own behavior. The same patterns they spot in others are the patterns others spot in them.

Which means most people sending AI-generated communication into their professional relationships are eroding something they cannot see, cannot measure, and will not be warned about. Their colleagues already know. They are just not saying anything. They are quietly recalibrating how much of themselves to invest in someone who apparently cannot be bothered to invest in return.

If nobody has had this conversation with you yet, consider why.

My colleague got lucky. Someone cared enough to say the uncomfortable part. He’s a better engineer for receiving the feedback. And I’m better for having built the kind of team where the conversation was even possible.

Not everyone has this. Maybe you do. Maybe the cost of finding out is worth the conversation.

// Pragmatic GenAI. Credibility sold separately.
